Introduction

Searching in advertisements

Delpher contains millions of advertisements in digital form. However, querying for advertisements with a specific visual style or ads for a particular product is more cumbersome than one would expect. First, advertisements regularly contain logo’s or stylishly formatted text, which have not been probably identified by OCR-software during digitisation. This makes it difficult to locate ads for particular brands or products using full-text search. Second, a researcher does not always know all the relevant keywords related to a product in a particular time. Text mining techniques can help to extract significant keywords that express historic brand names or products. However, the brand name might not always appear as part of the OCR-ed text. How can we then find advertisements based on their visual features, and possibly detect trends in these visual features?

Tool

SIAMESE uses a Convolutional Neural Network (CNN) to identify similar visual trends in advertisements. A neural network comprises a set of layers of algorithms that work similar to how neurons work in our brains. They can filter out patterns in visual data, which can aid researchers in identifying visual trends. Researchers can analyse these visual trends or use them to dive deeper into the textual content of a specific group of advertisements to identify specific keywords related to products with a strong visual character. This is an approach similar to Yale’s Neural Neighbors project*.

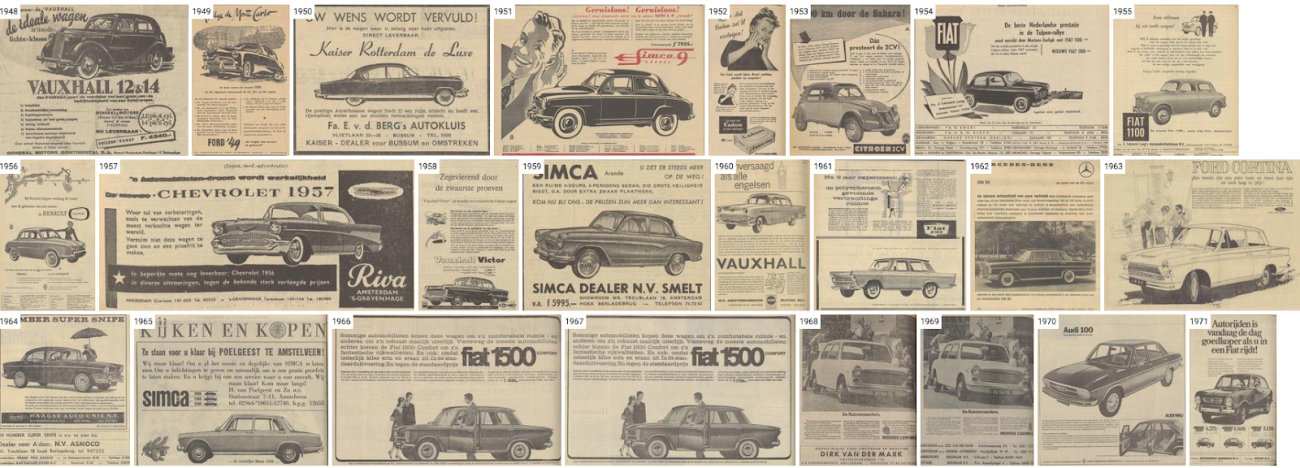

SIAMESE presents users with the ten most similar images to a source images and a timeline view consisting of the most similar image in every year between 1948 and 1995. Since the first three years after the war contained very few advertisements, we start at 1948. The ten most similar images to a source allows users to detect whether the source image was part of identifiable visual style. The timeline view allows users to study a visual trend’s development.

Dataset

Before we could use a CNN to look for similarities within images, we needed to create a dataset of advertisements that contain a high degree of visual content. Our starting dataset consisted of just over 1.6 million advertisements from two national newspapers: Algemeen Handelsblad (1945-1969) and NRC Handelsblad (1970-1994). The filtering comprised two steps. First, we removed images with a width or height smaller than 500px and advertisements with dimensions that resembled classifieds. Second, we removed image with a character proportion higher than 0.0005, which are advertisements that contained mostly text. Third, we removed 5,820 images that had copyright restrictions. This resulted in a dataset of 426,777 advertisements for the period 1945 - 1994.

For the second step, we used an open-source software library for machine intelligence, Tensorflow to reduce the images into vector representations. In other words, we identified abstract visual elements by which we could classify the images. We used the penultimate layer of the neural network to group pictures together on 2,048 visual aspects. In the penultimate layer, the visual elements are represented as vectors in a multidimensional space. We can look for images and their nearest neighbors based on the euclidean distance between these vectors in the space. Consequently, we can find images that share these abstract visual elements.

*For more on the underlying technique see: di Lenardo, I., Seguin, B., Kaplan, F. (2016). Visual Patterns Discovery in Large Databases of Paintings. In Digital Humanities 2016: Conference Abstracts.

When using this tool we request you cite it as follows:

Lonij, J., Wevers, M. (2017) SIAMESE. KB Lab: The Hague. http://lab.kb.nl/tool/siamese

Live demo

Instructions

Opening screen & searching

When a user first visits SIAMESE, the tool uses a random image as a source image. After clicking on the ‘random’ button, the user is presented with a different source image, which introduces a level of serendipity. The user can also paste a URL from Delpher associated with a particular ad from either Algemeen Handelsblad or NRC Handelsblad into the search field.

Navigating further

By clicking on one of the similar images, we feed this image as a source image, which facilitates further exploratory searching. Clicking on the year displayed for an image navigates the user to the advertisement in the Delpher interface. Here one can further explore the advertisement, its position in the newspaper, and the textual content. At the bottom of the page, the user can finds a random selection of images that can also be used as source images.

Examples

SIAMESE performs especially well in grouping clearly identifiable objects in advertisements. On the one hand, this is driven by the high number of advertisements for fashion (Fig. 1) and automobiles (Fig. 2). On the other, it also reveals that these advertisements for these products featured a consistent visual trend.

Figure 1. SIAMESE timeline view for fashion advertisements

Figure 2. SIAMESE timeline view for automobile advertisements

Other products which are heavily advertised in Dutch newspapers, such as mortgages or banks, rarely pop up as part of a visual style. This leads one to conclude that these do not have a very uniform visual trends throughout the years.

SIAMESE is also prone to recognise trends in layout. Advertisements that contain particular ways of framing text, or that feature a divergent width or height are grouped together. This does not always mean that the objects represented in the ads are similar.